Azure Active Directory, and thus any relying party on that service (such as Office 365), has two different modes for the custom domains that you add to it: Managed and Federated. Managed means that authentication happens against Azure Active Directory itself. The password hashes of the user accounts are stored in Azure AD, and no connection to any (on-premises) Active Directory domain is made.

Managed domains have the advantage that you don’t require any additional infrastructure, and setting up the identities for logging on to Office 365, for example, is fairly easy. However, managed domains do not support Single Sign-On (SSO), which most companies do want. That is why AAD also supports Federated domains. In this case the authentication for a user happens against the corporate (on-premises) Active Directory through a service called ADFS (Active Directory Federation Services). More information on federated versus managed can be found on the Kloud blog (https://blog.kloud.com.au/2013/06/05/office-365-to-federate-or-not-to-federate-that-is-the-question/).

In this article we are going to take a look at how the federation service can be hosted in Azure (and possibly also on-premises) and what the architectures might look like.

When the majority of users are remote, placing the federation service (ADFS) on Azure (or any other cloud) makes sense. In order to log on to Office 365, Power BI or other services, users will need an internet connection anyway, and the cloud is able to support highly available services. You don’t have to rely on a single internet connection in your datacenter, and it’s even possible to distribute the service geographically to improve speed and uptime for your authentication service.

Usually (and in almost every picture), the ADFS is displayed as being “on-premises” but let’s see what happens if we move this to Azure IaaS:

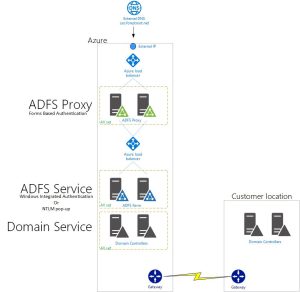

A regular deployment of ADFS consists of the ADFS proxy servers in the DMZ, the ADFS farm itself and the domain controllers that do the actual authentication validation. In Azure we can easily replicate this (note that the architecture is based on WID, the Windows Internal Database). The ADFS Proxy services, or Web Application Proxy services as they are called in Windows Server 2012 R2, are externally accessible. They provide a forms-based (and SAML-based) logon to the backend services, but do not provide Windows Integrated Authentication (WIA). The latter is required if we want to achieve SSO on clients that don’t run Windows 10 (that’s a separate discussion altogether).

The SSO part of ADFS comes from the backend ADFS servers. They are capable of providing WIA-based authentication through NTLM or Kerberos, but we cannot mix forms-based logon into the equation. That is why we want external users to hit the ADFS Proxy servers (to manually provide username and password) and internal users to hit the backend ADFS servers to log on with NTLM or Kerberos and achieve SSO.

We can control the internal/external user service access through Split DNS and send the internal users over the displayed VPN tunnel. However, while the ADFS farm itself is highly redundant, the VPN connection is now the single point of failure.

There are multiple solutions to fix this:

- Implement redundant VPNs

- Implement an ADFS server on-premises that is part of the farm

- Implement two Azure sites and two VPNs and add load balancers

Redundant VPNs

Azure ARM Gateways now support multiple connections with a priority. http://www.gi-architects.co.uk/2015/12/azure-highly-available-dynamic-vpn-automatic-failover/

BGP is now also supported: https://azure.microsoft.com/en-us/documentation/articles/vpn-gateway-bgp-resource-manager-ps/

While we can make the connections redundant, a single point of failure remains in this case. All services still run in a single VNET inside one datacenter. Azure provides a 99.95% uptime SLA for the VMs in their Availability Set, but no SLA is available for the redundant VPN connections.
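To get a feel for why the missing VPN SLA matters, the effect of chaining and duplicating components can be estimated with some simple arithmetic. The sketch below is illustrative only: the 99.95% figure comes from the text above, while `VPN_ASSUMED` is a hypothetical availability (there is no SLA for the VPN connection), and the model assumes failures are independent, which real outages often are not.

```python
from math import prod


def serial_availability(parts):
    """Availability of a chain in which ALL components must be up."""
    return prod(parts)


def parallel_availability(parts):
    """Availability of redundant components where ANY one being up is enough."""
    return 1 - prod(1 - p for p in parts)


VM_SLA = 0.9995       # from the text: VMs in an Availability Set
VPN_ASSUMED = 0.999   # hypothetical figure, NOT an Azure SLA

# Internal users need both the VMs and the VPN tunnel.
single_vpn = serial_availability([VM_SLA, VPN_ASSUMED])

# Two independent tunnels: the VPN part only fails if both tunnels fail.
redundant_vpn = serial_availability(
    [VM_SLA, parallel_availability([VPN_ASSUMED, VPN_ASSUMED])]
)

print(f"single VPN:    {single_vpn:.6f}")
print(f"redundant VPN: {redundant_vpn:.6f}")
```

Under these assumptions the redundant tunnels bring the end-to-end figure close to the VM SLA itself, but the result can never exceed the availability of the single datacenter behind them.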

ADFS On-Premises

When you install ADFS servers on-premises, they can be members of the same farm (if using WID). This allows external users to connect to Azure (ADFS Proxy) only, where they are served the forms-based logon, while corporate network users are redirected (through split DNS) to the on-premises ADFS farm (through a load balancer). When using a load balancer (recommended), it is even possible to point to both the local and the Azure ADFS servers.

Two Azure Sites

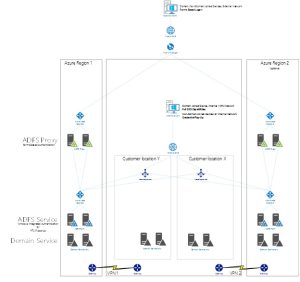

Another way could be to architect with two Azure sites in mind. This allows the service to be geographically distributed for external users, but also for internal users.

In the architecture above, a centralized load balancer in the customer network has two service endpoints to connect to (or alternatively points directly to the servers in Azure rather than to the Azure Load Balancer). Internal users can be distributed between the two sites, and each site has its own VPN connection, which could come from two different routers or internet connections. External users are load balanced between the sites through Traffic Manager. Each site still has a full set of every VM, as the uptime guarantees from Azure apply only when two (or more) VMs are in an Availability Set.

(Note that again, ADFS is using WID, not SQL)

Multiple Source locations

While the above picture works perfectly for single-location companies, it does not work well for companies with multiple office locations. It would make more sense to connect to a more local resource than to a single load balancer far away in order to get to the closest Azure location (or the second location on failover).

The problem with multiple locations for the service is that we now need to have multiple A records for the service, each pointing to an internal load balancer. This can be solved in multiple ways:

- Subnet Prioritization (netmask ordering)

- Windows Server 2016 DNS Policies

- Cisco (or other) Global Site Selector services

We will dive into these options a little later, but one might ask: If the DNS already needs to provide multiple A records, why not discard the internal load balancer altogether? This would be possible in only a few circumstances; the load balancer in this case provides service health monitoring when redirecting the client to either Azure Region 1 or Region 2. It is the fault detection in the architecture for not just the servers in Azure but also the VPN connection itself. Only using DNS policies or Subnet Prioritization will not provide this fault detection and may redirect a user to an unavailable service (due to VPN outage or other outages).
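The fault detection that the load balancer adds can be sketched as "probe each endpoint in priority order and use the first healthy one". This is a minimal illustration, not how any particular load balancer is implemented. The endpoint names are hypothetical, and the probe is passed in as a function so the selection logic can be tried without a network; `tcp_probe` shows one possible real probe (a plain TCP connect on port 443, which exercises the VPN path as well as the server).

```python
import socket
from typing import Callable, Iterable, Optional


def tcp_probe(host: str, port: int = 443, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def pick_endpoint(
    endpoints: Iterable[str], is_healthy: Callable[[str], bool]
) -> Optional[str]:
    """Return the first endpoint (in priority order) whose probe succeeds."""
    for endpoint in endpoints:
        if is_healthy(endpoint):
            return endpoint
    return None  # nothing reachable: every site or every VPN is down


# Hypothetical internal names for the two Azure regions.
ENDPOINTS = ["adfs-region1.example.internal", "adfs-region2.example.internal"]

# Simulated outage: the VPN to region 1 is down.
down = {"adfs-region1.example.internal"}
chosen = pick_endpoint(ENDPOINTS, lambda host: host not in down)
print(chosen)
```

A DNS-only setup has no equivalent of `is_healthy`, which is why it can keep handing out the address of a site that is unreachable. In production you would pass `tcp_probe` (or an HTTPS check) instead of the simulated lambda.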

Subnet Affinity

DNS servers in Windows 2000 Server (and onwards) provide what’s called Subnet Prioritization (also known as netmask ordering). In short, this means that if the IP address of a requesting client falls in the same /24 subnet as one of the A records, the DNS server will return that A record first.

Okay, that probably needs some more explaining:

Say we have multiple A records:

Sso.forestroot.net 172.16.0.10

Sso.forestroot.net 192.168.0.213

Sso.forestroot.net 172.16.10.10

If a client with IP address 10.0.0.1 requests sso.forestroot.net, it gets the records back in rotating order (round robin), as none of the A records falls within 10.0.0.0/24.

With Subnet Prioritization enabled, if a client with IP address 192.168.0.10 requests sso.forestroot.net, the client always receives 192.168.0.213 at the top of the list.

By default, the DNS server does this based on a /24 subnet, but it is possible to extend this to a /16 or even /8 subnet architecture (https://support.microsoft.com/en-us/kb/842197).
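The ordering rule can be illustrated with a small simulation. This is a sketch of the idea only, not the Windows DNS Server implementation: the function name `netmask_order` is made up for this example, and unlike a real server it does not rotate the remaining records round robin.

```python
import ipaddress


def netmask_order(client_ip, records, prefix_len=24):
    """Put records in the client's own subnet first, keep the rest in order."""
    # Derive the client's network from its address and the configured mask.
    client_net = ipaddress.ip_network(f"{client_ip}/{prefix_len}", strict=False)
    local = [r for r in records if ipaddress.ip_address(r) in client_net]
    remote = [r for r in records if ipaddress.ip_address(r) not in client_net]
    return local + remote


RECORDS = ["172.16.0.10", "192.168.0.213", "172.16.10.10"]

# Client in 192.168.0.0/24: the matching record moves to the front.
print(netmask_order("192.168.0.10", RECORDS))

# Client in 10.0.0.0/24: no record matches, so the order is unchanged.
print(netmask_order("10.0.0.1", RECORDS))

# With a /16 mask, both 172.16.x.x records count as local for this client.
print(netmask_order("172.16.10.50", RECORDS, prefix_len=16))
```

The `prefix_len` parameter plays the role of the mask setting described in the KB article: widening it from 24 to 16 makes more records count as "local" to the client.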

When the IP addressing scheme of your company allows for it, you could use this technology to control the traffic to your (geo) distributed services, so that users are always redirected to their closest service entry point.

SideNote: When designing subnets for Azure services, you could add a small part of your on-premises datacenter IP range to a VNET (a VNET can host multiple address spaces and subnets) so that you can take advantage of this prioritization without re-IP’ing your entire network. For example:

Say my first office location (SITE1) uses 172.16.0.0/16 and my second office location uses 192.168.0.0/16.

For Azure VNETs I reserved 10.0.0.0/8 and gave 10.0.0.0/16 to Azure Region 1, and 10.1.0.0/16 to Azure Region 2.

I want my first office location to always go to Azure Region 1 and the second office location to Azure Region 2, but I want to use the same service URLs for both locations.

I could splice off 172.16.100.0/24 and also put it into my Azure VNET in Azure Region 1. Now I can create a load balancer on 172.16.100.0/24 and still point it to an Azure VM in the /16 network that was assigned to that Azure region.

I would also splice off 192.168.x.0/24 and put it in my Azure VNET in Region 2.

I now can use Subnet Prioritization for services so they always go to my nearest Azure location based on multiple A records.
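One detail of this side note is worth checking: a client elsewhere in 172.16.0.0/16 does not share a /24 with a load balancer in 172.16.100.0/24, so the default /24 comparison would not match and the wider /16 mask mentioned earlier is needed. The sketch below verifies that with Python's `ipaddress` module. All host addresses are hypothetical, and `192.168.200.10` merely stands in for an address in the unspecified 192.168.x.0/24 range.

```python
import ipaddress


def same_network(client_ip, record_ip, prefix_len):
    """True if record_ip lies in the client's network for the given mask."""
    net = ipaddress.ip_network(f"{client_ip}/{prefix_len}", strict=False)
    return ipaddress.ip_address(record_ip) in net


SITE1_CLIENT = "172.16.5.20"    # hypothetical client in office location 1
REGION1_LB = "172.16.100.10"    # hypothetical load balancer in 172.16.100.0/24
REGION2_LB = "192.168.200.10"   # hypothetical stand-in for 192.168.x.0/24

# Default /24 comparison: the client and the Region 1 record do not match.
print(same_network(SITE1_CLIENT, REGION1_LB, 24))

# /16 comparison: the Region 1 record is now "local" to the SITE1 client.
print(same_network(SITE1_CLIENT, REGION1_LB, 16))

# The Region 2 record never matches a SITE1 client.
print(same_network(SITE1_CLIENT, REGION2_LB, 16))
```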

Windows Server 2016 DNS policies

If your network is highly distributed, however, and you have address ranges mixed everywhere, relying on subnet prioritization might be cumbersome. Instead you might want to take a look at the Windows Server 2016 DNS policies. With this new feature you can control which response is given back to a client depending on its subnet. With these policies you can instruct the DNS server to form the reply based on the request, for example:

We still have the multiple records:

Sso.forestroot.net 172.16.0.10

Sso.forestroot.net 192.168.0.213

Sso.forestroot.net 172.16.10.10

Say that we now want a client with IP address 10.0.1.10 to always receive the first and last record (172 range). With DNS policies we define a client subnet that contains this address (e.g. 10.0.1.0/24) and we define in the policy that only 172.16.10.10 and 172.16.0.10 are to be given back when a query comes in from that subnet.

SideNote: You don’t have to host your entire domain on these new DNS services if you are not ready to do so yet. It is even possible to create a DNS delegation from your primary DNS servers towards the 2016 DNS services. In the above example, I host forestroot.net on the domain controllers, but delegate sso.forestroot.net as a zone to the 2016 DNS servers. Inside the 2016 DNS servers themselves I can create multiple root A records (@) for the zone pointing to the different IP addresses.
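The behaviour of such a policy can be mimicked in a few lines. This is a simulation of the concept only and does not use the Windows DNS Server tooling. The subnet name `BranchSubnet` and the function `resolve` are invented for this example, and the subnet 10.0.1.0/24 is chosen so that it contains the example client 10.0.1.10.

```python
import ipaddress

ALL_RECORDS = ["172.16.0.10", "192.168.0.213", "172.16.10.10"]

# Named client subnets (hypothetical name).
CLIENT_SUBNETS = {"BranchSubnet": ipaddress.ip_network("10.0.1.0/24")}

# Policy: which records a client from a given named subnet may receive.
POLICY = {"BranchSubnet": ["172.16.0.10", "172.16.10.10"]}


def resolve(client_ip):
    """Return the records the policy allows for this client."""
    addr = ipaddress.ip_address(client_ip)
    for name, network in CLIENT_SUBNETS.items():
        if addr in network:
            return POLICY[name]
    # No policy matches: fall back to the full record set.
    return ALL_RECORDS


print(resolve("10.0.1.10"))   # client inside the policy subnet
print(resolve("10.0.2.10"))   # client outside any policy subnet
```

Unlike subnet prioritization, the match here is between the client and an arbitrary named subnet, so the client's address range does not need to resemble the addresses in the A records at all.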

(Cisco) Global Site Selector

To be honest, I’m not a big Cisco fan when it comes to firewalls and such. Not that they are bad, but I prefer other brands due to the interfaces and controls. One thing is really cool, though: the Global Site Selector series. It’s basically the 2016 DNS server policies in an appliance. It too can control what is answered on a query for a particular domain name. It does not just answer the query, however; it also checks whether the site is up. Basically it’s Azure Traffic Manager, but for internal records (yes, it can do external too, but Traffic Manager is usually cheaper for my setups).

More info

In order to deploy ADFS Proxy services using Traffic Manager, please see the following guide:

https://www.linkedin.com/pulse/azure-traffic-manager-adfs-kyle-green

To deploy Domain Controllers on Azure

Once a VPN is up and running, it is time for the first domain controller to be promoted on Azure. Before doing this, however, make sure to add the subnets in Active Directory Sites and Services and create a new site for each of your Azure regions (Region 1 and Region 2 in the example) (https://blogs.technet.microsoft.com/canitpro/2015/03/03/step-by-step-setting-up-active-directory-sites-subnets-site-links/).

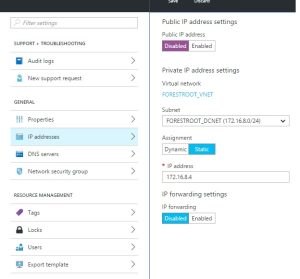

Next, deploy a new Virtual Machine (Windows Server 2012 R2 Datacenter) from the library and make sure to attach it to the correct network and put it in a newly created availability set (which you can create during the creation of the virtual machine). After it has been fully deployed, add a new data disk to the machine without any caching and configure it to be the F drive. In order to configure the network settings like IP address and DNS, never configure that from within the machine, but instead use the Network Interfaces option in the machine properties in the portal.

Set a static IP address and configure the DNS setting to point to the existing domain controller on-premises (I have removed the public IP address for the server so that the domain controller is not reachable from the internet). Reboot the machine and promote it to a domain controller, placing the NTDS and SYSVOL directories on the (newly created) F drive.

After that, perform the same for the 2nd domain controller, but instead of configuring the DNS settings per machine, you can now configure the VNET DNS settings to point to the fixed IP address of the first domain controller and add the second IP address for the 2nd domain controller too.